Imagine you're at the helm of a data-driven organization. You understand the critical role integration services play in your daily operations. They're the invisible gears that extract, transform, and load data from different corners of your organization into one cohesive system. For years, you've relied on Microsoft's Integration Services (SSIS) to make this happen. But lately, the buzz around cloud computing has piqued your interest and you're contemplating moving your trusty integration services to the cloud.

This is where Azure Data Factory comes into play. With Azure Data Factory you can craft, schedule and oversee data pipelines effortlessly. It's like having a scalable and cost-efficient data moving service at your fingertips, connecting your on-premises and cloud-based data sources seamlessly.

Transitioning your data processes to the cloud is a significant decision and it's normal to feel overwhelmed. However, with careful planning, this shift can lead to enhanced efficiency and scalability.

To make this transition smoother, start by understanding your current integration landscape. Take inventory of your existing data sources, identify common data transformations, and assess if there's any custom code in play. This step will provide a solid foundation for a successful migration to the cloud.
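To make the inventory step concrete, here is a minimal sketch that counts task types in an SSIS package and flags custom code. It assumes the 2012+ `.dtsx` XML layout, where each task is a `DTS:Executable` element with a `DTS:ExecutableType` attribute. The sample package, the function names, and the rule "any type containing `ScriptTask` means custom code" are illustrative assumptions, not an official assessment tool.

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Namespace used by .dtsx package files (assumed 2012+ format).
DTS_NS = "www.microsoft.com/SqlServer/Dts"

# Hand-written, heavily simplified sample package for illustration only.
SAMPLE_DTSX = """
<DTS:Executable xmlns:DTS="www.microsoft.com/SqlServer/Dts"
                DTS:ExecutableType="Microsoft.Package">
  <DTS:Executables>
    <DTS:Executable DTS:ExecutableType="Microsoft.ExecuteSQLTask"/>
    <DTS:Executable DTS:ExecutableType="Microsoft.Pipeline"/>
    <DTS:Executable DTS:ExecutableType="Microsoft.ScriptTask"/>
  </DTS:Executables>
</DTS:Executable>
"""


def inventory_package(dtsx_xml: str) -> Counter:
    """Count executables (the package itself plus its tasks) by type."""
    root = ET.fromstring(dtsx_xml)
    counts = Counter()
    # iter() also visits the root element, so the package itself is counted.
    for elem in root.iter(f"{{{DTS_NS}}}Executable"):
        exec_type = elem.get(f"{{{DTS_NS}}}ExecutableType", "unknown")
        counts[exec_type] += 1
    return counts


def has_custom_code(counts: Counter) -> bool:
    """Hypothetical heuristic: treat any Script Task as custom code."""
    return any("ScriptTask" in exec_type for exec_type in counts)


counts = inventory_package(SAMPLE_DTSX)
for exec_type, n in sorted(counts.items()):
    print(f"{exec_type}: {n}")
print("Custom code found:", has_custom_code(counts))
```

Running something like this across a folder of packages gives a rough picture of which transformations are common and where custom code may need extra attention during the migration.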

Moving to a modern way of managing data

Chart your Migration Course: now that you have a clear picture of your existing setup, it's time to plan your move to Azure Data Factory. Imagine you're planning a cross-country road trip. Identify the data sources you want to move to the cloud (your destinations), create a migration plan (your roadmap) and figure out the best way to get your data there safely.

Build data pipelines your way: Azure Data Factory is like a canvas for your data artistry. You can use its user-friendly drag-and-drop interface, which feels a bit like arranging puzzle pieces, or, if you're feeling adventurous, dive into the coding realm with Azure Data Factory's SDK. Picture yourself crafting a simple data pipeline using the drag-and-drop interface:

- Start with a blank canvas (create a new pipeline).

- Choose your source dataset (perhaps an on-premises SQL Server database).

- Select your destination dataset (maybe an Azure SQL Database).

- Add a copy activity to shuttle data from the source to the destination.

- Fine-tune the copy activity settings to make sure everything aligns, like a master chef perfecting a recipe.
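For readers who prefer code to canvas, the steps above can be sketched as a pipeline definition in the JSON shape Azure Data Factory uses for pipelines. This is a minimal sketch built with plain Python dictionaries rather than the SDK. The pipeline, activity, and dataset names are hypothetical, the datasets are assumed to exist already, and the `writeBatchSize` value is only an example of a setting you might fine-tune.

```python
import json


def build_copy_pipeline(name: str, source_dataset: str, sink_dataset: str) -> dict:
    """Return a pipeline definition containing a single Copy activity."""
    copy_activity = {
        "name": "CopySourceToSink",  # hypothetical activity name
        "type": "Copy",
        # Source dataset, e.g. a table in an on-premises SQL Server database.
        "inputs": [{"referenceName": source_dataset, "type": "DatasetReference"}],
        # Destination dataset, e.g. a table in an Azure SQL Database.
        "outputs": [{"referenceName": sink_dataset, "type": "DatasetReference"}],
        "typeProperties": {
            "source": {"type": "SqlServerSource"},
            # Example of a tunable copy setting; the value is illustrative.
            "sink": {"type": "AzureSqlSink", "writeBatchSize": 10000},
        },
    }
    return {"name": name, "properties": {"activities": [copy_activity]}}


# All names below are placeholders for illustration.
pipeline = build_copy_pipeline(
    "CopyOnPremToAzureSql", "OnPremSqlServerTable", "AzureSqlTable"
)
pipeline_json = json.dumps(pipeline, indent=2)
print(pipeline_json)
```

The drag-and-drop interface produces a definition of this general shape behind the scenes, so keeping pipelines as JSON also makes them easy to review and version alongside other code.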

Gains of the transition

Keep an eye on things: your data pipelines are like living organisms, and they need attention. Azure Data Factory provides tools to monitor and alert you about any hiccups. Think of it as having a personal assistant who lets you know when something needs your attention.
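As a rough illustration of what that personal assistant does, the sketch below summarizes a list of pipeline runs and collects the failed ones for alerting. The run records are hand-written samples, loosely shaped after the run ID, pipeline name, status, and message that Azure Data Factory reports for each pipeline run. The helper names and the alert text format are assumptions for this example, and no real service is called.

```python
from collections import Counter

# Hand-written sample records; all values are illustrative.
sample_runs = [
    {"runId": "run-001", "pipelineName": "CopyOnPremToAzureSql",
     "status": "Succeeded", "message": ""},
    {"runId": "run-002", "pipelineName": "CopyOnPremToAzureSql",
     "status": "Failed", "message": "Sink connection timed out"},
    {"runId": "run-003", "pipelineName": "LoadReportingMart",
     "status": "InProgress", "message": ""},
]


def summarize_runs(runs: list) -> Counter:
    """Count runs per status."""
    return Counter(run["status"] for run in runs)


def failed_runs(runs: list) -> list:
    """Return only the runs that ended in failure."""
    return [run for run in runs if run["status"] == "Failed"]


summary = summarize_runs(sample_runs)
alerts = [
    f'{run["pipelineName"]} ({run["runId"]}): {run["message"]}'
    for run in failed_runs(sample_runs)
]

print(dict(summary))
for alert in alerts:
    print("ALERT:", alert)
```

In practice the built-in monitoring views and alert rules cover this without custom code; the sketch only shows the kind of information they surface.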

Transitioning to Azure Data Factory offers a world of benefits, just like upgrading your car to a more efficient and more eco-friendly model. You gain scalability, save costs, and elevate your data integration game. By approaching this journey thoughtfully, you can make the shift to this cloud-based data integration service feel like a well-planned road trip, full of excitement and discovery.

Azure Data Factory at twoday

At twoday, we use Azure Data Factory daily. New data-related and reporting projects are implemented with Azure Data Factory as the initial orchestration and integration tool. Other clients willingly take the new road and migrate their SSIS packages into Azure Data Factory pipelines. It is worth mentioning that, besides the features already described, we also use the Data Flow activity, which runs on a Spark engine. This is a huge win when it comes to conditional data splits and performance.

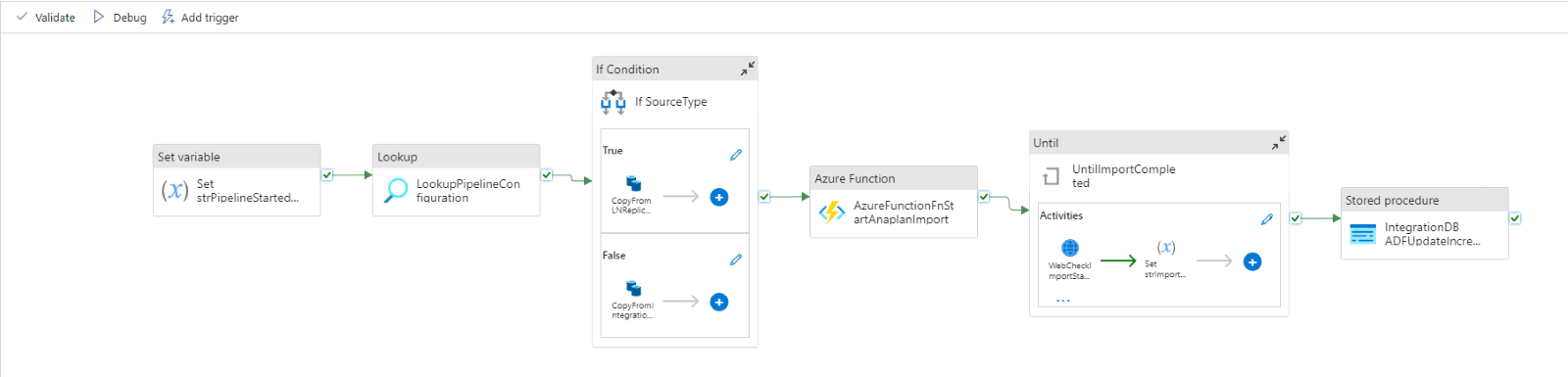

Here is one of the examples of pipeline structure:

Integration services are at the core of every data-driven organization, and Azure Data Factory is one way to get a more efficient and flexible data service. It may sound simple: assess your current setup, make a modernization plan, and stick to it. In reality, it is a much more difficult task. If you do it correctly, step by step, you will start to see the benefits of Azure Data Factory.